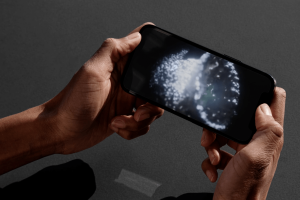

If the day hasn’t arrived yet, it’s coming: You need to talk to your child about explicit deepfakes.

The problem may have seemed abstract until fake pornographic images of Taylor Swift, generated by artificial intelligence, went viral on the social media platform X/Twitter. Now the issue simply can’t be ignored, say online child safety experts.

“When that happens to [Swift], I think kids and parents start to realize that no one is immune from this,” says Laura Ordoñez, executive editor and head of digital media and family at Common Sense Media.

Whether you’re explaining the concept of deepfakes and AI image-based abuse, talking about the pain such imagery causes victims, or helping your child develop the critical thinking skills to make ethical decisions about deepfakes, there’s a lot that parents can and should cover in ongoing conversations about the topic.

Before you get started, here’s what you need to know:

1. You don’t need to be an expert on deepfakes to talk about them.

Adam Dodge, founder of The Tech-Savvy Parent, says parents who feel they must thoroughly understand deepfakes before talking with their child needn’t worry about seeming like, or becoming, an expert.

Instead, all that’s required is a basic grasp of the concept that AI-powered software and algorithms make it shockingly easy to create realistic explicit or pornographic deepfakes, and that such technology is easy to access online. In fact, children as young as elementary school students may encounter apps or software with this capability and use them to create deepfakes with few technical challenges or barriers.

“What I tell parents is, ‘Look, you need to understand how early and often kids are getting exposed to this technology, that it’s happening earlier than you realize, and appreciate how dangerous it is,’” Dodge says.

Dodge says parents need to be prepared to address the possibilities that their child will be targeted by the technology; that they’ll view inappropriate content; or that they’ll participate in creating or sharing fake explicit images.

2. Make it a conversation, not a lecture.

If you’re alarmed by these possibilities, try not to rush into a discussion of deepfakes. Instead, Ordoñez recommends bringing up the topic in an open-ended, nonjudgmental way, asking your child what they know or have heard about deepfakes.

She adds that it’s important to think of AI image-based abuse as a form of online manipulation that exists on the same spectrum as misinformation or disinformation. With that framework, reflecting on deepfakes becomes an exercise in critical thinking.

Ordoñez says that parents can help their child learn the signs that imagery has been manipulated. Though the rapid evolution of AI means some of these telltale signs no longer show up, Ordoñez says it’s still useful to point out that any deepfake (not just the explicit kind) might be identifiable through face discoloration, lighting that seems off, and blurriness where the neck and hair meet.

Parents can also learn alongside their child, says Ordoñez. This might involve reading and talking about non-explicit AI-generated fake content together, like the song “Heart on My Sleeve,” released in April 2023, that claimed to use AI versions of the voices of Drake and The Weeknd. While that story has relatively low stakes for children, it can prompt a meaningful conversation about how it might feel to have your voice used without your consent.

Parents might take an online quiz with their child that asks the participant to correctly identify which face is real and which is generated by AI, another low-stakes way of confronting together how easily AI-generated images can dupe the viewer.

The point of these activities is to teach your child how to start an ongoing dialogue and develop the critical thinking skills that will surely be tested as they encounter explicit deepfakes and the technology that creates them.

3. Put your kid’s curiosity about deepfakes in the right context.

While explicit deepfakes amount to digital abuse and violence against their victim, your child may not fully comprehend that. Instead, they might be curious about the technology, and even eager to try it.

Dodge says that while this is understandable, parents routinely put reasonable limits on their children’s curiosity. Alcohol, for example, is kept out of their reach. R-rated films are off limits until they reach a certain age. They aren’t permitted to drive without proper instruction and experience.

Parents should think of deepfake technology in a similar vein, says Dodge: “You don’t want to punish kids for being curious, but if they have unfiltered access to the internet and artificial intelligence, that curiosity is going to lead them down some dangerous roads.”

4. Help your child explore the consequences of deepfakes.

Children may see non-explicit deepfakes as a form of entertainment. Tweens and teens may even incorrectly believe the argument made by some: that pornographic deepfakes aren’t harmful because they’re not real.

Still, they may be persuaded to see explicit deepfakes as AI image-based abuse when the discussion incorporates concepts like consent, empathy, kindness, and bullying. Dodge says that invoking these ideas while discussing deepfakes can turn a child’s attention to the victim.

If, for example, a teen knows to ask permission before taking a physical object from a friend or classmate, the same rule applies to digital objects, like photos and videos posted on social media. Using those digital files to create a nude deepfake of someone else isn’t a harmless joke or experiment, but a kind of theft that can lead to deep suffering for the victim.

Similarly, Dodge says that just as a young person wouldn’t assault someone on the street out of the blue, it doesn’t align with their values to assault someone virtually.

“These victims are not fabricated or fake,” says Dodge. “These are real people.”

Women, in particular, have been targeted by technology that creates explicit deepfakes.

In general, Ordoñez says that parents can talk about what it means to be a good digital citizen, helping their child to reflect on whether it’s OK to potentially mislead people, the consequences of deepfakes, and how seeing or being a victim of the imagery might make others feel.

5. Model the behavior you want to see.

Ordoñez notes that adults, parents included, aren’t immune to eagerly participating in the latest digital trend without thinking through the implications. Take, for example, how quickly adults started making cool AI self-portraits using the Lensa app back in late 2022. Beyond the hype, there were significant concerns about privacy, user rights, and the app’s potential to steal from or displace artists.

Moments like these are an ideal time for parents to reflect on their own digital practices and model the behavior they’d like their children to adopt, says Ordoñez. When parents pause to think critically about their online choices, and share the insight of that experience with their kid, it demonstrates how they can adopt the same approach.

6. Use parental controls, but don’t bet on them.

When parents hear of the dangers that deepfakes pose, Ordoñez says they often want a “quick fix” to keep their kid away from the apps and software that deploy the technology.

Using parental controls that restrict access to certain downloads and sites is important, says Dodge. However, such controls aren’t surefire. Kids can and will find a way around these restrictions, even if they don’t realize what they’re doing.

Additionally, Dodge says a child may see deepfakes or encounter the technology at a friend’s house or on someone else’s mobile device. That’s why it’s still critical to have conversations about AI image-based abuse, “even if we’re putting powerful restrictions via parental controls or taking devices away at night,” says Dodge.

7. Empower instead of scare.

The prospect of your child hurting a peer with AI image-based abuse, or becoming a victim of it themselves, is frightening. But Ordoñez warns against using scare tactics as a way of discouraging a child or teen from engaging with the technology and content.

When speaking to young girls, in particular, whose social media photos and videos might be used to generate explicit deepfakes, Ordoñez suggests talking to them about how it makes them feel to post imagery of themselves, and the potential risks. These conversations should not place any blame on girls who want to participate in social media. However, talking about risks can help girls reflect on their own privacy settings.

While there’s no guarantee a photo or video of them won’t be used against them at some point, they can feel empowered by making intentional choices about what they share.

And all adolescents and teens can benefit from knowing that encountering technology capable of making explicit deepfakes, at a developmental period when they’re highly vulnerable to making rash decisions, can lead to choices that severely harm others, says Ordoñez.

Encouraging young people to learn how to step back and ask themselves how they’re feeling before they do something like make a deepfake can make a huge difference.

“When you step back, [our kids] do have this awareness, it just needs to be empowered and supported and guided in the right direction,” Ordoñez says.
